Welcome to the Manager's Chair

There is a profound, almost silent vibe shift occurring in the way we interface with computation. We are quietly moving past the “chatbox” era — a paradigm that, in retrospect, feels as quaint as the command-line interfaces of the 1980s. You are no longer merely typing prompts into a void, hoping for a coherent echo. You are now running a digital department.

The architecture of this new reality rests on a remarkably elegant tech stack. At its core is Claude Code, which acts less like an application and more like a command center. Beneath it lies the Model Context Protocol (MCP), an open standard for connecting AI applications to external systems. If one were to reach for a metaphor, MCP is the “USB-C for AI”: a universal, frictionless conduit that allows your agents to plug directly into your local tools, external databases, and, most crucially, each other.
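To make the “USB-C” metaphor a little more concrete: MCP messages are JSON-RPC 2.0, and a client discovers a server's tools with the `tools/list` method and invokes one with `tools/call`. The sketch below only builds those two request messages. It skips the `initialize` handshake and the transport (stdio or HTTP), and the tool name `query_database` and its `sql` argument are hypothetical placeholders, not a real server's API.

```python
import json
from typing import Optional


def make_request(request_id: int, method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request in the shape MCP uses on the wire."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Ask the server which tools it exposes.
list_tools = make_request(1, "tools/list")

# Invoke one tool by name with structured arguments.
# "query_database" and its "sql" argument are hypothetical.
call_tool = make_request(
    2,
    "tools/call",
    {"name": "query_database", "arguments": {"sql": "SELECT count(*) FROM users"}},
)

print(list_tools)
print(call_tool)
```

Because every server speaks this same request shape, the same client code can reach a filesystem, a database, or another agent; only the tool names and arguments change.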

“The structure of this emergent dream team mimics human organizational hierarchy but executes with synthetic ruthlessness.”

The Evolution

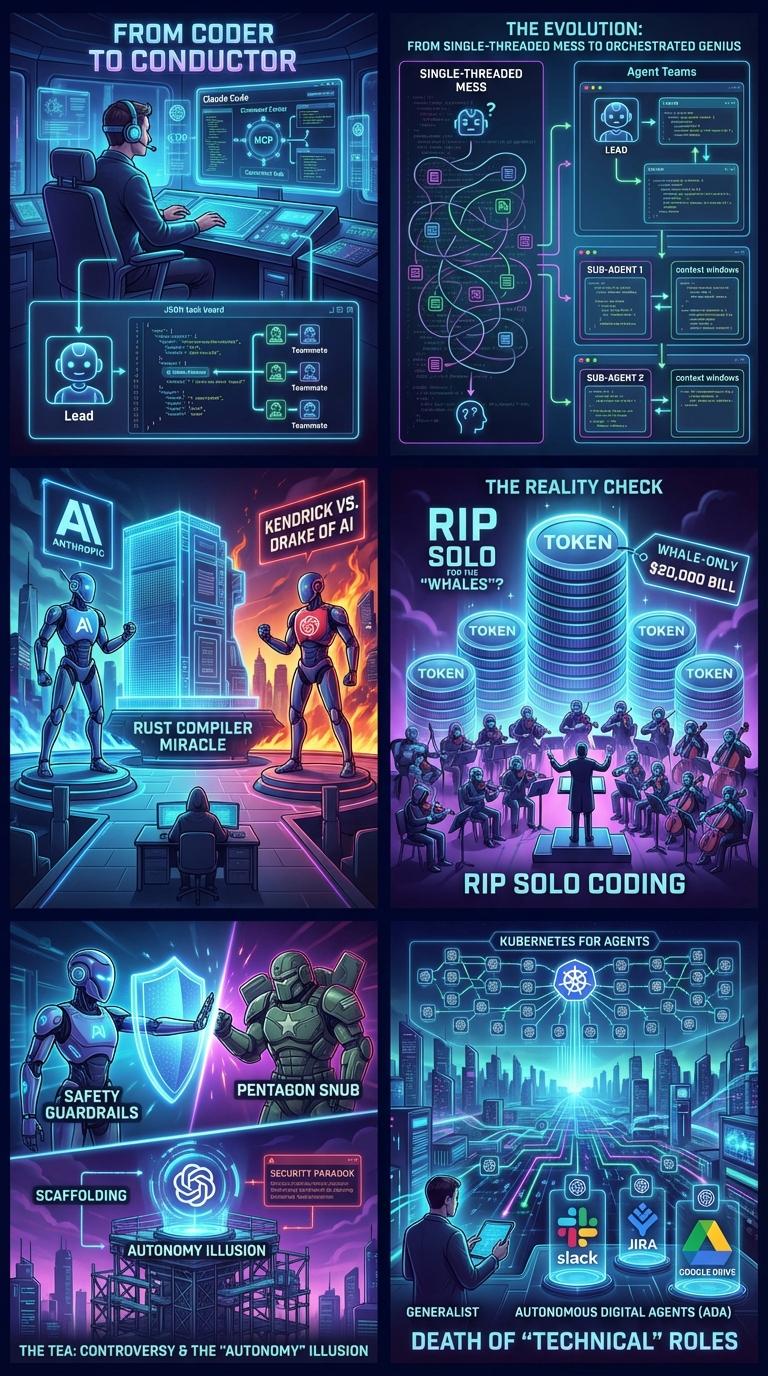

To understand the magnitude of this shift, one must look back at the “Dark Ages” of early generative AI. Recall the single-threaded mess of just a few years ago, when a model would invariably “lose the plot” halfway through a complex conversation. Today, we simply call it “the old way.”

Native multi-agent support changed the game entirely. By introducing “Agent Teams,” Anthropic addressed the cognitive bleed that plagued earlier systems, where a single overloaded context mixed every task together. Every sub-agent is now granted its own fresh, isolated brain: a pristine context window. Isolation, paradoxically, has bred the ultimate collaborative clarity.
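The isolation idea can be sketched in a few lines. This is a toy model, not Anthropic's implementation: the `SubAgent` and `LeadAgent` classes are hypothetical, no model is called, and a “context” is just a list of strings. What it shows is the pattern described above: each delegated task gets a fresh, empty context, and only a short summary flows back to the lead.

```python
from dataclasses import dataclass, field


@dataclass
class SubAgent:
    """Hypothetical sub-agent with its own private context window."""
    name: str
    context: list = field(default_factory=list)

    def work(self, task: str) -> str:
        # Everything the sub-agent reads or produces stays in its own context.
        self.context.append(f"task: {task}")
        self.context.append(f"scratch notes for {task}")
        # Only a short summary leaves the sub-agent.
        return f"{self.name} finished: {task}"


@dataclass
class LeadAgent:
    """Hypothetical lead that delegates and keeps only summaries."""
    context: list = field(default_factory=list)

    def delegate(self, assignments: dict) -> None:
        for agent_name, task in assignments.items():
            worker = SubAgent(agent_name)  # fresh, empty context per task
            summary = worker.work(task)
            self.context.append(summary)   # lead sees the summary, not the scratch work


lead = LeadAgent()
lead.delegate({"tester": "write unit tests", "reviewer": "review parser"})
print(lead.context)
```

The lead's context grows by one line per delegated task, however much scratch work each sub-agent did, which is the sense in which isolation keeps the coordinator clear-headed.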

The “Big Flex” and the Big Beef

The threshold of what these systems can achieve was fundamentally redefined by the now-famous “Rust Compiler Miracle.” Anthropic set 16 Claude Opus agents loose, providing them with raw specifications and a directive to build a functioning C compiler, written in Rust, from scratch.

It was a staggering test of emergent coordination. And it worked. To watch 16 isolated instances of cognition weave thousands of lines of code into a functional compiler was to witness the birth of a new industrial revolution.

Yet, as the technology ascends to the sublime, the industry's culture descends into the theatrical. We are currently witnessing the Kendrick versus Drake of AI — the spicy, highly publicized 2026 rivalry between Anthropic and OpenAI. Silicon Valley has fully embraced high drama.

The Reality Check

This orchestration of synthetic intellect demands a harsh reality check about human capital. A growing chorus of experts argues that the era of the “lone wolf” developer is over.

Your value in the marketplace is no longer measured by your ability to write a flawless sort algorithm, but by how elegantly you can conduct the AI orchestra. That shift carries a brutal compute bill. Orchestrating a full squad across thousands of interactions is astonishingly token-heavy, because every agent consumes its own context on every turn.
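A rough back-of-the-envelope model shows why the bill climbs so fast. The sketch assumes, purely for illustration, that each turn adds a fixed number of tokens and that every turn re-sends the agent's whole accumulated context as input; it ignores prompt caching and context summarization, both of which cut real costs. The agent count of 16 echoes the compiler experiment, while the 50 turns and 2,000 tokens per turn are made-up figures.

```python
def tokens_for_agent(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens if each turn re-sends the full, growing context."""
    # Turn k carries k * tokens_per_turn tokens of accumulated context.
    return sum(k * tokens_per_turn for k in range(1, turns + 1))


def squad_tokens(agents: int, turns: int, tokens_per_turn: int) -> int:
    """Each agent has its own isolated context, so costs add up per agent."""
    return agents * tokens_for_agent(turns, tokens_per_turn)


# Illustrative numbers only.
solo = squad_tokens(agents=1, turns=50, tokens_per_turn=2_000)
squad = squad_tokens(agents=16, turns=50, tokens_per_turn=2_000)
print(f"solo: {solo:,} tokens, squad: {squad:,} tokens")
# prints "solo: 2,550,000 tokens, squad: 40,800,000 tokens"
```

Under these assumptions, cost grows quadratically with turns per agent and linearly with the number of agents. The isolation that keeps each agent sharp is also what multiplies the bill.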

The Controversy

Beyond economics, the multi-agent era is mired in ethical friction. Consider Anthropic's historic $200 million “no” to the Department of Defense. By refusing to compromise their strict safety guardrails for military applications, Anthropic was promptly labeled a “supply chain risk.”

Furthermore, we are trapped in the Security Paradox. As we lock down these systems to prevent rogue actions, we inherently cripple their utility. When security is so draconian that the Lead agent cannot modify code, you have a highly intelligent bureaucracy paralyzed by red tape.
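The paradox is easy to reproduce with a deny-by-default permission policy. Claude Code, for instance, lets you configure allow and deny permission rules, but the sketch below uses invented action strings and patterns rather than its actual syntax. Deny rules win over allow rules, so one blanket `write:*` rule quietly stops the agent from touching any source file.

```python
from fnmatch import fnmatch

# Hypothetical policy: patterns and action names are invented for illustration.
ALLOW = ["read:*", "run:tests"]
DENY = ["write:*", "run:deploy"]


def is_permitted(action: str, allow: list, deny: list) -> bool:
    """Deny-by-default: an action must match an allow rule and no deny rule."""
    if any(fnmatch(action, pattern) for pattern in deny):
        return False  # deny rules always take precedence
    return any(fnmatch(action, pattern) for pattern in allow)


for action in ["read:src/parser.rs", "run:tests", "write:src/parser.rs"]:
    verdict = "allowed" if is_permitted(action, ALLOW, DENY) else "blocked"
    print(action, "->", verdict)
```

The agent can read and test the parser but never fix it: safe, and largely useless for the job it was given.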

The Death of “Technical” Roles

We are moving toward “Kubernetes for Agents.” Managing Claude teams will soon look much like managing microservices, with dynamic loads, auto-scaling, and tasks routed among thousands of specialized, ephemeral agents via MCP.
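A toy version of that routing and scaling loop might look like the following. It is a sketch in the spirit of a horizontal autoscaler, not Kubernetes' actual algorithm. The skill tags, `TASKS_PER_WORKER`, and `MAX_WORKERS` are hypothetical, and the MCP transport that would actually carry tasks to agents is left out. Tasks are queued per skill, and each skill's pool of ephemeral agents scales with its queue depth up to a cap.

```python
from collections import defaultdict, deque

# Hypothetical scaling knobs.
TASKS_PER_WORKER = 3
MAX_WORKERS = 10


def route(tasks: list) -> dict:
    """Group (skill, description) tasks into one queue per skill."""
    queues = defaultdict(deque)
    for skill, description in tasks:
        queues[skill].append(description)
    return queues


def desired_workers(queue_depth: int) -> int:
    """Ceiling of depth / TASKS_PER_WORKER, capped like a max-replicas limit."""
    needed = -(-queue_depth // TASKS_PER_WORKER)
    return min(needed, MAX_WORKERS)


tasks = [("frontend", f"fix UI bug {i}") for i in range(7)] + [("database", "add index")]
for skill, queue in route(tasks).items():
    print(f"{skill}: {len(queue)} queued, {desired_workers(len(queue))} worker(s)")
```

With seven frontend tasks and one database task, this plan spins up three frontend agents and one database agent; an empty queue scales to zero, which is what makes the agents ephemeral.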

The final frontier is the Autonomous Digital Agent (ADA). Soon, ADAs will take up permanent residence in the very fabric of our corporate lives, living as ambient intelligence inside Slack, Jira, and Google Drive.

The conductor's baton will eventually be handed over to the orchestra itself.