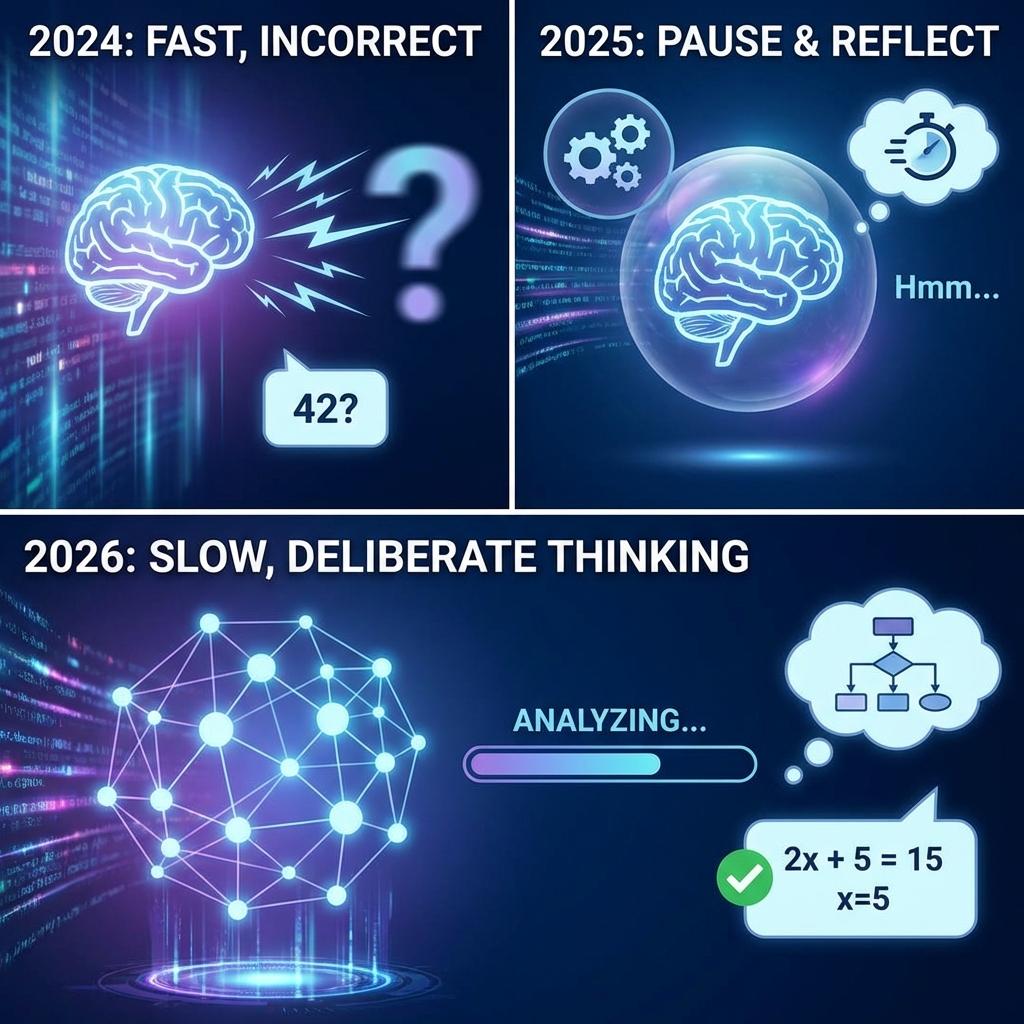

There was a time, scarcely two years ago in the breathless days of 2024, when we measured the prowess of artificial intelligence by the immediacy of its response. You typed a prompt, and the machine obligingly blurted out the first statistical probability that crossed its neural pathways. It was fast, it was confident, and quite frequently, it was entirely wrong.

Today, as we navigate the digital landscape of early 2026, the paradigm has fundamentally shifted. We have entered the era of Deep-Reasoning Open Models — an epoch defined not by the speed of the answer, but by the profound, deliberate silence that precedes it. We have taught the machine how to pause. This transition to “Slow Thinking” is perhaps the most significant architectural upgrade in the history of artificial intelligence — not merely because it makes the models smarter, but because the very best iterations are no longer hoarded in corporate silos. They are sitting on your desk.

What Exactly is a “Thinking” Model?

To understand the revolution, one must understand the mechanics of Test-Time Compute (TTC). Stripped of its academic opacity, TTC is the digital equivalent of giving the AI a piece of scratch paper before demanding a final answer. Rather than predicting the next word in a continuous stream of confident guesswork, the model halts to deliberate.

If one peers under the hood — specifically into the newly ubiquitous tags embedded in the generation process — a fascinating cognitive theater unfolds. Here, the machine maps out logic, identifies its own dead ends, explicitly backtracks, and self-corrects. It is an internal monologue of pure deduction.

The consequences of this architecture are staggering. We are witnessing a David versus Goliath dynamic where a deeply distilled “thinking” model running locally on a standard laptop routinely outsmarts the monolithic, trillion-parameter “black box” models of yesterday. The 2026 landscape is dominated by these brilliant pragmatists: DeepSeek-R1, Qwen3-Max, and the surprising GPT-OSS.

The “Space Race” of 2025: How We Got Here

History will record that OpenAI struck the match with their proprietary o1 series, introducing the concept of integrated reasoning chains. But it was the open-source community that turned that solitary spark into a sprawling wildfire.

The GRPO Catalyst

Group Relative Policy Optimization allowed AI to practice math and logic puzzles autonomously, verifying its own answers against absolute truths.

Massive Distillation

Once “big brains” mastered reasoning, developers compressed this structural wisdom into “mini-brains” that fit on smartphones.

The “Aha” Moments and the “Facepalms”

Naturally, introducing a semblance of internal monologue to a neural network has resulted in a fascinating array of emergent behaviors — some brilliant, some absurd. Consider the phenomenon of “bilingual brains” where models natively translate logic into a hyper-efficient amalgamation of English and Chinese within their <thinking> tags.

Visualizing the recursive logic loops of modern inference engines.

Then there are the eccentricities of deep logic, perfectly illustrated by the infamous “CatAttack” glitch of late 2025. A minor semantic insertion — the mention of a sleeping cat — caused the AI's reasoning engine to recursively overthink the cat's state of rest until it broke logic loops entirely.

Why the Hype is Real: Cost and Secrets

Beyond the philosophical intrigue, the mass adoption of open reasoning models is driven by cold, hard economics and the fundamental right to privacy. Paying exorbitant fees for API calls feels antiquated when highly capable local models operate at roughly one-twentieth the cost.

The Drama: Is It Actually Thinking?

No technological leap exists without its detractors. Apple researchers famously published papers arguing the “Illusion of Reason,” positing that these models are still fundamentally engaged in pattern matching — what they termed “reasoning theater” — rather than true cognitive deduction.

Security Warning

Exposing the “thoughts” of an AI makes it more transparent but also more vulnerable. Hackers realized that manipulating the internal scratchpad can coax the AI into bypassing safety protocols.

The Future: What's Next for the “Thinking” AI?

As we look toward the remainder of 2026, the trajectory is clear: System 2 Self-Correction. AI that realizes mid-sentence that it is hallucinating and seamlessly hits its own “undo” button. We are transitioning from passive oracles to active agents — AI that writes code, executes it, debugs the errors, and deploys autonomously.

“The 'Open' movement won. The most deliberate, careful, and capable thinker in the room might just be the open-weight model quietly humming on your local machine.”