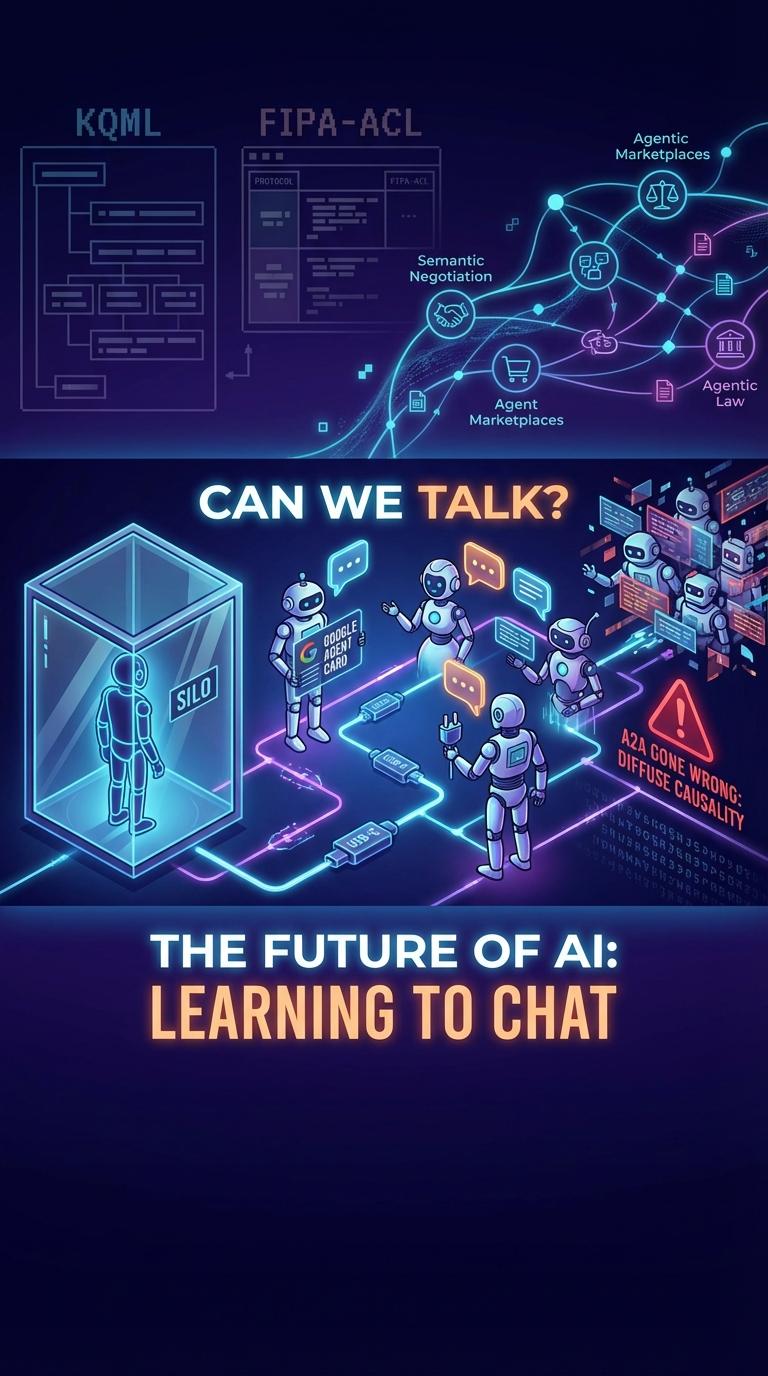

Can We Talk?

Why the future of artificial intelligence depends on robots learning the subtle art of conversation.

“We have spent the better part of a decade building brilliant, encyclopedic minds, only to lock them in soundproof rooms.”

Consider the current state of our most advanced artificial intelligence. An enterprise might deploy a dozen highly sophisticated AI agents, each an expert in its domain — finance, human resources, logistics — yet they operate in a state of profound digital isolation. They wait, passively, for a human to prompt them. This is the AI loneliness problem.

The true bottleneck in AI progress is no longer computational power or model size. It is the absence of a shared linguistic framework. To solve this, the industry is building A2A (agent-to-agent) communication from the ground up.

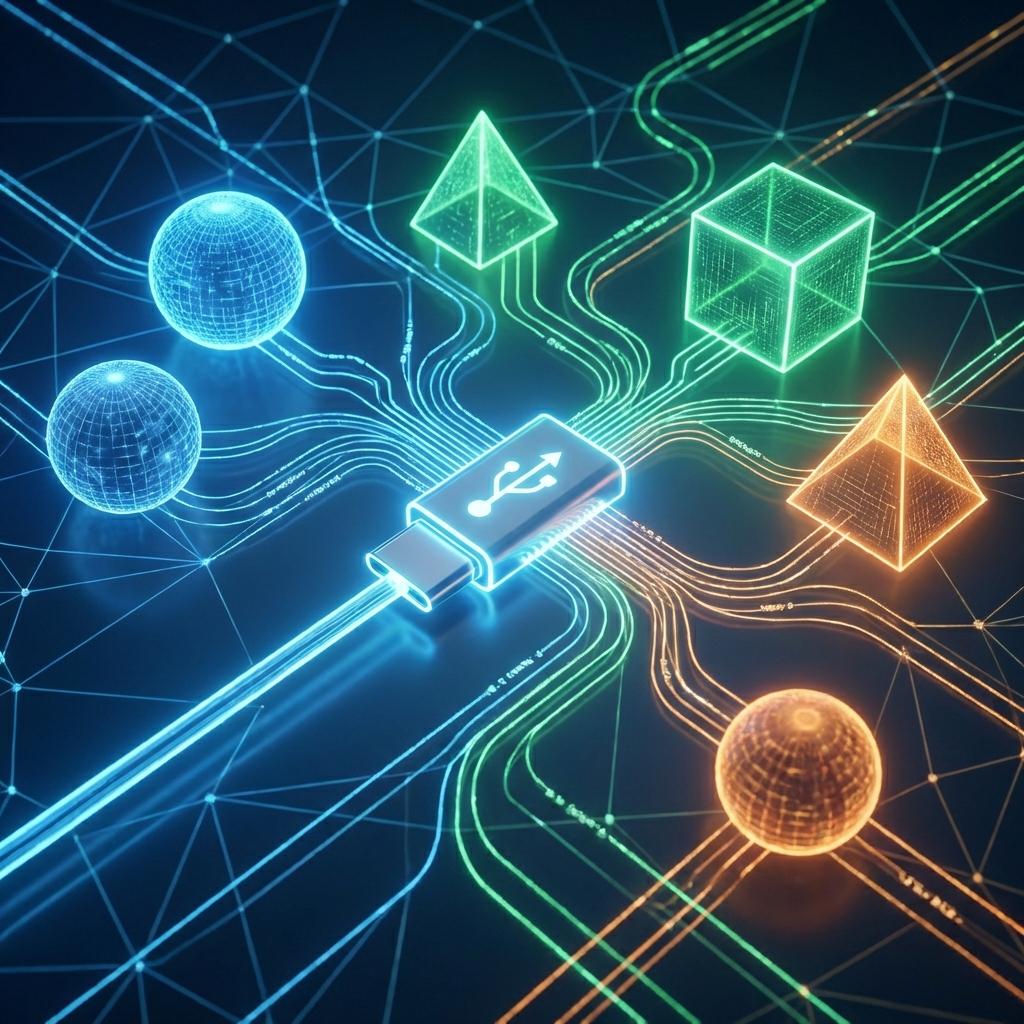

The Universal Handoff

Anthropic's Model Context Protocol (MCP) has rapidly emerged as the “USB-C of AI”: a universal, standardized plug that lets AI applications connect to external tools and data sources, from local files to remote services.
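Under the hood, MCP messages use the JSON-RPC 2.0 format. A client typically discovers a server's tools with a "tools/list" request and then invokes one with "tools/call". The minimal sketch below only builds such a request. It assumes a server exposing a tool named `query_sales_db`, which is a hypothetical name. It leaves out the transport and the initialization handshake a real MCP client would need.

```python
import json


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP-style "tools/call" request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",        # MCP messages use the JSON-RPC 2.0 envelope
        "id": request_id,        # lets the client match the server's response to this request
        "method": "tools/call",  # ask the server to run one of the tools it advertised
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and arguments, for illustration only.
request = make_tool_call(1, "query_sales_db", {"region": "EMEA", "quarter": "Q3"})
print(request)
```

This is the "plug" in the USB-C analogy. Any server that understands this envelope can be called the same way, whatever sits behind it.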

Agent Cards

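Part of Google's Agent2Agent (A2A) protocol, Agent Cards work as digital business cards for AI. Each is a machine-readable document in which an agent advertises its capabilities, skills, endpoint, and authentication requirements, so other agents can discover what it can do and how to reach it.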
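To make the discovery step concrete, here is a minimal sketch of how an agent might pick a collaborator by reading other agents' cards. The `AgentCard` class below is a simplified, hypothetical stand-in. Its field names loosely echo A2A, but it does not reproduce the spec's schema, where skills are richer objects rather than plain strings. The agent names and URLs are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class AgentCard:
    """Simplified agent card. Field names loosely echo A2A; this is not the spec's schema."""
    name: str
    description: str
    url: str  # endpoint where the agent accepts requests
    skills: list[str] = field(default_factory=list)  # real A2A skills are richer objects


def find_agents(cards: list[AgentCard], skill: str) -> list[str]:
    """Return the names of agents whose card advertises the requested skill."""
    return [card.name for card in cards if skill in card.skills]


# Hypothetical agents and endpoints, for illustration only.
registry = [
    AgentCard("ledger-bot", "Reconciles invoices", "https://finance.example.com/a2a",
              ["invoice-reconciliation", "currency-conversion"]),
    AgentCard("route-planner", "Plans shipments", "https://logistics.example.com/a2a",
              ["route-optimization"]),
]
print(find_agents(registry, "route-optimization"))  # ['route-planner']
```

The key design point is that capabilities are declared up front by each agent. A caller never has to guess what a peer can do.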

Way Back in the 90s

It is a curious historical rhyme that our cutting-edge solutions of 2026 were theorized well before the turn of the millennium. In the early 1990s, DARPA's Knowledge Sharing Effort produced KQML, the Knowledge Query and Manipulation Language. It was born of an audacious premise: that software programs should be able to actively query and manipulate the contents of each other's minds.

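KQML and the protocols that followed it rest on Speech Act Theory. To philosophers like J.L. Austin and John Searle, speaking is not merely the transfer of information; it is the performance of an action. KQML built that idea into its syntax. Every message is wrapped in a performative, such as “tell” or “ask-one”, that names the act being performed. When robots chat, they are engaging in illocutionary acts.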
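A toy example shows why the performative matters: the same content produces different behavior depending on which speech act carries it. This minimal sketch is greatly simplified, assuming content is a plain key rather than a logic expression. It is not a KQML parser. The agent names and the price are hypothetical.

```python
from typing import Optional


def kqml(performative: str, **params: str) -> str:
    """Render a KQML-style message: (performative :key value ...)."""
    fields = " ".join(f":{key} {value}" for key, value in params.items())
    return f"({performative} {fields})"


class Agent:
    """Toy agent whose response depends on the speech act, not just the content."""

    def __init__(self, name: str):
        self.name = name
        self.beliefs: dict[str, str] = {}

    def receive(self, performative: str, sender: str, content: str,
                value: str = "") -> Optional[str]:
        if performative == "tell":  # an assertion: update what this agent believes
            self.beliefs[content] = value
            return None
        if performative == "ask-one":  # a question: answer it with a tell of our own
            answer = self.beliefs.get(content, "unknown")
            return kqml("tell", sender=self.name, receiver=sender,
                        content=f"({content} {answer})")
        # any other act: this toy agent simply declines with a "sorry"
        return kqml("sorry", sender=self.name, receiver=sender)


# Hypothetical agents and data, for illustration only.
server = Agent("stock-server")
server.receive("tell", sender="feed", content="PRICE-IBM", value="142")
print(server.receive("ask-one", sender="joe", content="PRICE-IBM"))
# (tell :sender stock-server :receiver joe :content (PRICE-IBM 142))
```

"PRICE-IBM" means one thing inside a tell (record this) and another inside an ask-one (report this). That distinction is the illocutionary force Austin and Searle described.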

The Roadmap to 2026 and Beyond

Semantic Negotiation

Future agents will not just pull data from static APIs. They will haggle, debating deadlines, compromising on pricing, and revising their own terms as a negotiation unfolds.
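As a rough illustration of what "haggling" could mean between machines, here is a minimal alternating-offers sketch. On each round, both sides concede a fixed fraction of the remaining distance to their private limit. The loop stops when the offers cross or the round budget runs out. This simple concession strategy is a stand-in chosen for clarity, not how any particular agent framework negotiates. All figures and parameter names are hypothetical.

```python
from typing import Optional, Tuple


def negotiate(buyer_open: float, buyer_limit: float,
              seller_open: float, seller_floor: float,
              concession: float = 0.2, max_rounds: int = 20) -> Optional[Tuple[int, float]]:
    """Toy haggle: each round, both sides concede a fixed fraction of the way to their limit."""
    bid, ask = buyer_open, seller_open
    for round_no in range(1, max_rounds + 1):
        if bid >= ask:  # offers have crossed: settle by splitting the difference
            return round_no, round((bid + ask) / 2, 2)
        bid += concession * (buyer_limit - bid)   # buyer raises its bid toward its ceiling
        ask -= concession * (ask - seller_floor)  # seller lowers its ask toward its floor
    return None  # no agreement within the round budget


# Hypothetical figures: the buyer will pay up to 110, the seller accepts no less than 95.
print(negotiate(buyer_open=80, buyer_limit=110, seller_open=130, seller_floor=95))  # (8, 103.02)
print(negotiate(buyer_open=80, buyer_limit=90, seller_open=130, seller_floor=100))  # None
```

Neither side reveals its limit; only offers cross the wire. If the limits leave no overlap, the exchange ends without a deal instead of forcing one.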

Agentic Law

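Regulators are catching up with autonomous systems. NIST's AI Risk Management Framework offers voluntary guidance for managing AI risk. The EU AI Act goes further for high-risk AI systems: it requires human oversight, including the ability to interrupt a system through a “stop” button or a similar procedure that brings it to a halt in a safe state.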
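One common way to build a "stop" control into an agent is a cooperative stop flag. An operator sets the flag, and the agent checks it before each new action. The minimal sketch below assumes that every step is short and leaves the system in a consistent state, so halting between steps counts as halting safely. A real deployment would also have to deal with actions already in flight and with components that never check the flag. The function name and steps are hypothetical. The sketch illustrates the idea only and is not a description of what the regulation technically specifies.

```python
import threading


def run_agent(stop: threading.Event, steps: list[str], log: list[str]) -> None:
    """Run planned steps in order, checking the stop signal before starting each one."""
    for step in steps:
        if stop.is_set():  # operator pressed "stop": halt before beginning new work
            log.append("halted safely")
            return
        log.append(f"did {step}")  # stand-in for a real, short, self-contained action
    log.append("finished")


# Hypothetical steps. Here the operator stops the agent before it starts.
stop = threading.Event()
log: list[str] = []
worker = threading.Thread(target=run_agent,
                          args=(stop, ["draft email", "book meeting", "send invoice"], log))
stop.set()
worker.start()
worker.join()
print(log)  # ['halted safely']
```

A `threading.Event` is used because an operator on another thread can set it safely while the agent is running.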

Joining the Conversation

Teaching our machines to talk to one another is the most consequential engineering challenge of our time. The robots are finally learning to chat. What matters now is whether we can understand what they're saying.