Introduction: The Death of the Drag-and-Drop

There is a distinct, agonizing memory shared by almost anyone who has ever touched interface design: spending hours nudging a blue rectangle three pixels to the left, then two to the right, desperately seeking a visual equilibrium that always seemed just out of reach. Those days are now numbered.

Enter Google Stitch. It is less a software update and more a fundamental rewiring of how we conceive of digital spaces. Stitch is Google's new AI powerhouse: an engine that takes abstract human intentions (the things we vaguely call "vibes") together with conversational voice commands, and transmutes them into fully functional, deployable applications.

Its underlying architecture provides the semantic reasoning necessary to understand not just what a button looks like, but what it means in the context of human interaction, and that is what makes the so-called magic happen with unnerving precision.

From Secret Startups to "Vibe Design"

To understand Stitch, we must trace its lineage back to Google's quiet yet strategic acquisition of Galileo AI. That startup laid the groundwork, proving that large language models could interpret UI constraints.

When Stitch first emerged in 2025, it was viewed as a fascinating toy — a rapid prototyping tool that saved a few hours of wireframing. But the March 2026 update fundamentally changed the paradigm. It crossed the threshold from novelty to utility.

We are witnessing the death of the Cartesian grid and the birth of "Vibe Design." Instead of dragging a drop-down menu onto a canvas, a creator now articulates the feeling and the purpose of the application. "Make it feel like a minimalist apothecary, but optimize for rapid checkout." The machine synthesizes the mood and the mechanics simultaneously.

The "Cool Factor": Features You'll Actually Use

The most striking interaction within Stitch is the multimodal dialogue you hold with your canvas. You no longer merely point and click; you converse. Through voice commands, you can dictate spatial logic and color theory in real time, watching the interface morph as if it were a living, breathing organism.

The new multimodal voice-driven interface canvas.

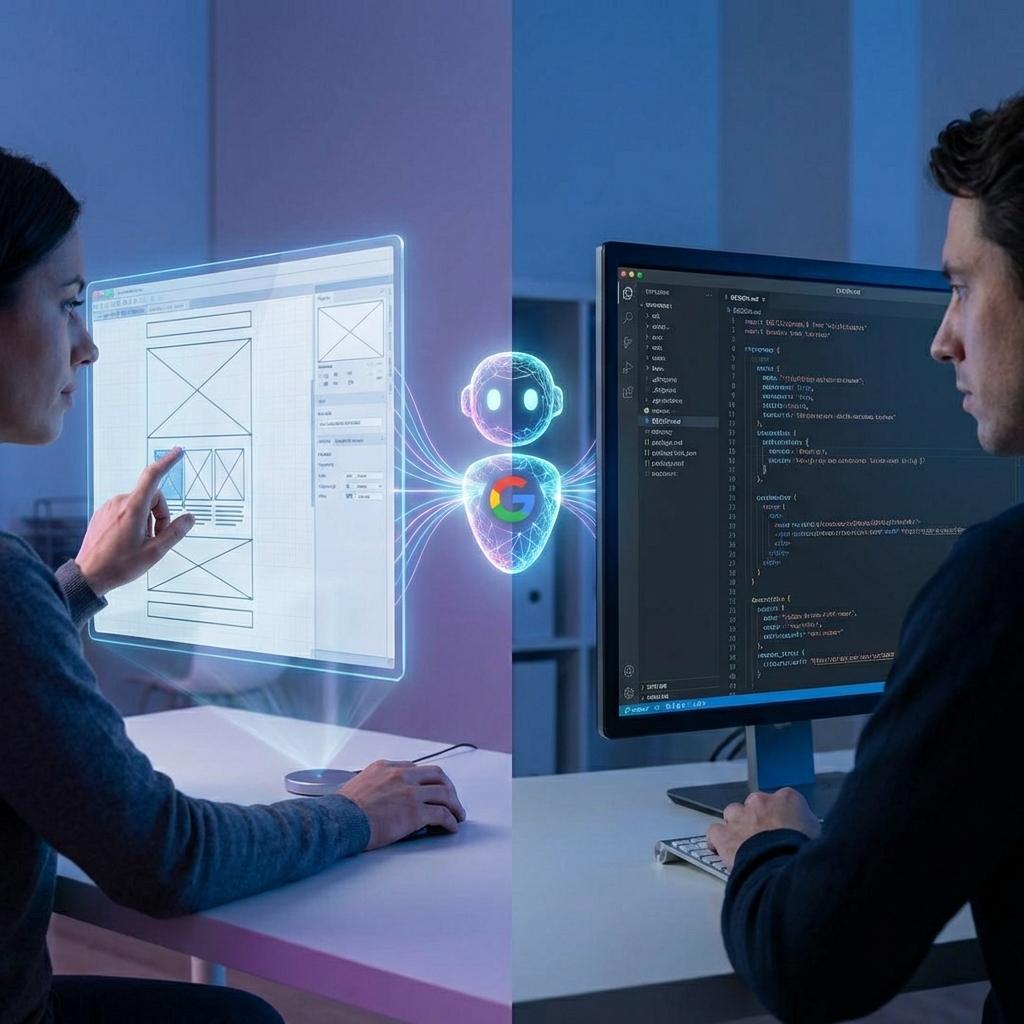

Internally at Google, this system was colloquially referred to as the "Peace Treaty." For decades, the relationship between designers and engineers has been a fraught border dispute over pixel-perfect implementation. Stitch was built to negotiate this armistice.

It achieves this through its secret sauce: the DESIGN.md file. This deceptively simple markdown document acts as the "digital DNA" of your application's aesthetic — encoding visual principles, component states, and spacing rules into a universally legible text format.
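To make the "digital DNA" idea concrete, here is a minimal sketch of how a tool might read such a file. The schema is not described here, so everything below is an assumption for illustration: the sample file contents, the `## Section` plus `- key: value` layout, and the `parse_design_md` helper are all hypothetical, not Stitch's actual format or API.

```python
import re

# Hypothetical DESIGN.md content. The real file's schema is not specified
# in this article; this layout is assumed purely for illustration.
SAMPLE_DESIGN_MD = """\
# Design

## Colors
- primary: #2F4F4F
- surface: #FAF7F2

## Spacing
- unit: 8px
- gutter: 24px

## Button states
- hover: darken 8%
- disabled: opacity 40%
"""


def parse_design_md(text: str) -> dict[str, dict[str, str]]:
    """Group `- key: value` bullets under their nearest `## ` heading."""
    sections: dict[str, dict[str, str]] = {}
    current = None
    for line in text.splitlines():
        heading = re.match(r"^##\s+(.+?)\s*$", line)
        bullet = re.match(r"^-\s+([^:]+):\s*(.+?)\s*$", line)
        if heading:
            # Start (or reopen) a section named after the heading text.
            current = sections.setdefault(heading.group(1), {})
        elif bullet and current is not None:
            # Bullets that appear before any `## ` heading are ignored.
            current[bullet.group(1).strip()] = bullet.group(2)
    return sections


tokens = parse_design_md(SAMPLE_DESIGN_MD)
print(tokens["Spacing"]["gutter"])
```

The appeal of a plain-text format like this is that both sides of the "Peace Treaty" can read it: a designer can edit the bullets by hand, and an engineer can load the same values into code without opening a design tool.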

The Court of Public Opinion

We have officially entered the "Vibe Coding" era, and the cultural response has been polarized. Non-technical founders and digital influencers are obsessed. For them, Stitch represents the democratization of creation — the instant, high-fidelity realization of abstract thought without the barrier of technical literacy.

Simultaneously, developers are experiencing a profound liberation. They are celebrating the end of the "handoff" nightmare. The days of deciphering labyrinthine, disorganized Figma files with hundred-layer-deep groupings are fading into merciful obsolescence.

The Spicy Side: Controversies and Drama

The day of the March 2026 update, a palpable panic swept through the design tooling sector. Figma's secondary market valuation reportedly felt the thermal shock of what many hastily dubbed the "Figma Killer."

Beneath the market turbulence lies a deeper intellectual debate regarding "AI Slop." Critics argue these generated designs are merely pretty faces lacking cognitive depth. Do these models genuinely understand user experience logic, or are they just parroting Dribbble trends?

Furthermore, we must confront the human cost. If an AI can reliably execute the foundational 80% of a design workload, the ecosystem for junior designers — who historically learned their craft through exactly that kind of repetitive execution — is gravely threatened.

Multimedia Deep-Dive

Listen to the Stitch keynote summary and watch the engine in action.

Stitch Product Update (Audio)

The Real-time Vibe Synth (Demo)

The Road Ahead: What's Next for Stitch?

Looking at the horizon, the trajectory of Stitch suggests we have only witnessed the prologue. With the impending integration of Gemini 3.0, Stitch will gain "deep reasoning" capabilities — moving beyond static screens to architecting state-heavy logic like multi-currency, multi-tenant checkout flows.

1. Multiplayer AI: A shared atelier where humans and autonomous agents negotiate UI builds in real time.
2. All-in-One Build: Transitioning from visual generation to full backend provisioning via Google Cloud.
3. Operational Synergy: Direct interface with Jira and Linear, turning design decisions into developer tickets instantly.

Picking Up the Conductor's Baton

When we step back to observe this epochal shift, it becomes clear that artificial intelligence is not confiscating the artist's brush — it is providing a vastly larger canvas and handing us the conductor's baton. The labor of creation is shifting from the hands to the mind, from manual execution to orchestration and taste.

The question that remains is not whether this shift will happen, but how you will respond to it.

Are you ready to articulate your vision, to step up to the podium and "vibe" your next application into existence? Or will you remain tethered to the manual geometries of a bygone era, endlessly dragging that blue rectangle three pixels to the left?