From Pixels to Projects: The Rise of Agentic Video Workflows

We are no longer merely generating loose pixels — we are generating architecture. Explore the shift from passive automation to cognitive synthetic production.

March 29, 2026 · 8 min read

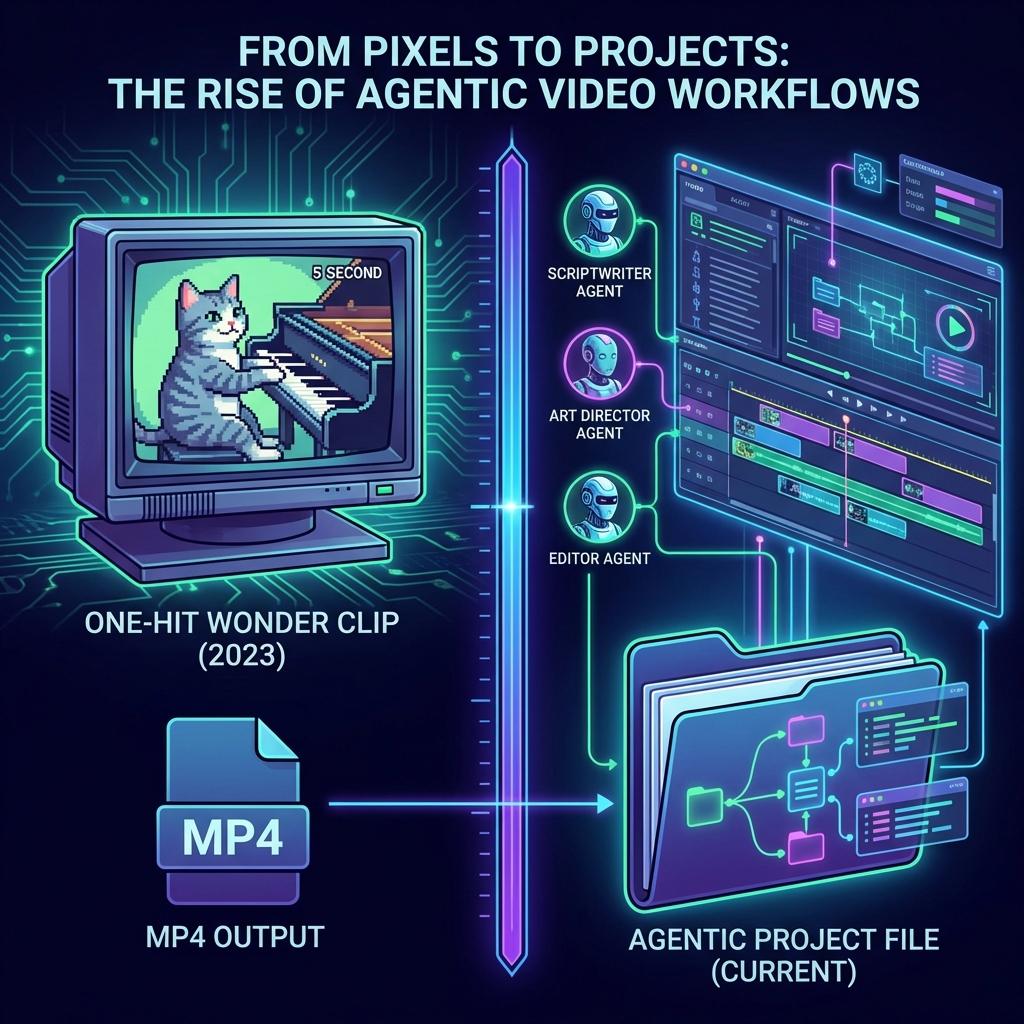

We have, perhaps mercifully, exhausted the novelty of the five-second AI-generated spectacle. The era of the hyper-realistic, temporally unstable, and ultimately hollow “one-hit wonder” clip — the cinematic equivalent of a parlor trick — is coming to a close.

What is replacing it is something far more structurally profound: output that is architecture rather than loose pixels. We have entered the era of Agentic Workflows. To understand this shift, one must stop thinking of AI as a standalone rendering tool and begin viewing it as a synthetic production crew.

We are now dealing with an ensemble of autonomous agents (a virtual Scriptwriter, Art Director, and Editor) operating in a continuous, synchronized loop. This gives rise to the “Prompt-to-Timeline” paradigm, in which a prompt returns an editable project rather than a finished clip. Philosophically and practically, receiving a layered, non-linear, freely editable project file is vastly superior to the flat, baked finality of an MP4. A video file is a closed door; a timeline is a room full of possibilities.
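To make the contrast with a flat MP4 concrete, here is a minimal sketch of what a layered project might look like as data. The class names (`Clip`, `Track`, `Timeline`), the asset file names, and the JSON layout are all assumptions made for illustration; they do not describe the project format of any real editing tool.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class Clip:
    source: str      # hypothetical asset name
    start: float     # position on the timeline, in seconds
    duration: float  # length of the clip, in seconds


@dataclass
class Track:
    name: str
    clips: list = field(default_factory=list)


@dataclass
class Timeline:
    title: str
    tracks: list = field(default_factory=list)

    def total_duration(self) -> float:
        """Return the end time of the last clip across all tracks."""
        ends = [c.start + c.duration for t in self.tracks for c in t.clips]
        return max(ends, default=0.0)


timeline = Timeline("demo", [
    Track("video", [Clip("shot_a.mov", 0.0, 4.0), Clip("shot_b.mov", 4.0, 6.0)]),
    Track("music", [Clip("theme.wav", 0.0, 10.0)]),
])

# Unlike a baked video file, one clip can be changed without touching the rest.
timeline.tracks[0].clips[1].duration = 8.0

# The whole project stays serializable, so it can be stored and reopened.
project_json = json.dumps(asdict(timeline), indent=2)
```

The point of the sketch is only that every element remains addressable after generation: lengthening the second shot is a one-line change to the data, not a regeneration of the whole video.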

From Passivity to Agency

Passive automation came first: algorithms detected audio peaks but had no understanding of narrative. The generative clips that followed offered intoxicating visuals but fragmented utility; creators were handed puzzle pieces without a board. Today, Video Foundation Models parse footage as semantic “knowledge,” assembling 90% of rough cuts autonomously.

This brings us to the new creator toolkit — a fascinating blend of human curation and machine generation that is fundamentally altering how we approach the moving image. The psychological paralysis of “Blank Timeline Syndrome” is effectively eradicated; the machine provides the clay, and the human begins the work of sculpting.

Interestingly, the most sophisticated creators have adopted what is colloquially termed the “Frankenstein Workflow.” Rather than accepting a single algorithmic output, they prompt three distinctly different AI generations, meticulously stitching the best elements together. It is an act of rebellion against the homogenization of machine art — a deliberate attempt to preserve the “human soul” and idiosyncratic rhythm within the edit.
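The selection step of such a workflow can be sketched in a few lines. This assumes the creator has already split each generation into the same segments and rated every segment by hand; the function name `stitch_best`, the generation names, and the 1-to-5 rating scale are hypothetical.

```python
def stitch_best(ratings: dict) -> list:
    """For each segment index, return the name of the highest-rated generation."""
    n_segments = len(next(iter(ratings.values())))
    picks = []
    for i in range(n_segments):
        # The human ratings, not the machine, decide which take survives.
        best = max(ratings, key=lambda name: ratings[name][i])
        picks.append(best)
    return picks


# Hand-assigned ratings for three segments of three different generations.
ratings = {
    "gen_a": [4, 2, 5],
    "gen_b": [3, 5, 1],
    "gen_c": [5, 3, 3],
}
picks = stitch_best(ratings)  # ['gen_c', 'gen_b', 'gen_a']
```

The judgment stays with the editor: the code only records which take won each segment, and the stitching itself still happens on the timeline.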

We are also seeing the formalization of temporal control through “Timestamp Prompting.” Creators are effectively writing screenplays for the timeline, dictating down to the second what must occur (e.g., [0:05] The protagonist turns; [0:10] the sky darkens). The timeline has become programmable.
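As a rough illustration of what "programmable" could mean here, the sketch below parses a timestamped prompt into an ordered list of cues. The `[m:ss]` syntax follows the example above; the `Cue` class, the function name, and the choice of `;` as a separator are assumptions, not the input format of any particular tool.

```python
import re
from dataclasses import dataclass


@dataclass
class Cue:
    start_seconds: int
    description: str


# Matches "[m:ss] text", where the text runs until the next "[" or ";".
CUE_PATTERN = re.compile(r"\[(\d+):(\d{2})\]\s*([^\[;]+)")


def parse_timestamp_prompt(prompt: str) -> list:
    """Turn a timestamped prompt into cues sorted by start time."""
    cues = []
    for minutes, seconds, text in CUE_PATTERN.findall(prompt):
        cues.append(Cue(int(minutes) * 60 + int(seconds), text.strip()))
    return sorted(cues, key=lambda cue: cue.start_seconds)


cues = parse_timestamp_prompt(
    "[0:05] The protagonist turns; [0:10] the sky darkens"
)
for cue in cues:
    print(cue.start_seconds, cue.description)
```

Once the prompt is structured this way, each cue can be edited, reordered, or regenerated on its own, which is what separates a timestamped script from a single free-form prompt.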

Yet, as with any technological leap of this magnitude, the room is deeply divided. On one side, we hear the cheers of democratization. Solo creators and the architects of “Faceless” YouTube channels are building vast media empires with virtually zero overhead. Corporate marketing executives are equally enamored, salivating over the holy grail of “Personalized Video at Scale.”

Conversely, the groans are palpable. Professional editors find themselves in a state of existential ambivalence. They appreciate the liberation from tedious organizational tasks, but they mourn the anticipated loss of “emotional timing”: that deeply human nuance of holding a shot one beat longer than algorithmic logic would suggest, knowing it will produce a visceral, gut-punch reaction in the audience.

Furthermore, we are drowning in “AI Slop.” As the barrier to entry plummets to zero, platforms are suffocating under a deluge of low-effort bot content and fake movie trailers — a grim aesthetic pollution that threatens to devalue the medium entirely. Legal frameworks are similarly buckling under the weight of automation.

In the United States, the courts and the Copyright Office have so far held that if an AI agent generates the entirety of a video without sufficient human authorship, the work cannot be copyrighted. And technically, we are still battling an identity crisis of a different kind: the persistent, immersion-breaking phenomenon of “Identity Drift,” where an AI forgets the specific geometry of a character's face halfway through a scene.

Demonstration: Inference-Time Editing

The pixels reorganize themselves instantly, without the agonizing wait of a render.

But as we peer into the immediate future, the solutions to these technical hurdles are already materializing. We are on the cusp of “Inference-Time Editing,” a paradigm where editing becomes a real-time, conversational exchange. Imagine simply speaking to your timeline, asking it to swap the lighting from dusk to dawn.

Similarly, the plague of Identity Drift is being addressed by “Timeline Conditioning” techniques like ReactID, which mathematically anchor a character's likeness with the aim of keeping it consistent from the first frame to the last.
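Whatever technique does the anchoring, drift itself can at least be measured. The sketch below is not ReactID or any conditioning method; it is a simple check that assumes some face-embedding model (not shown) has already produced one vector per frame, and it flags frames whose cosine similarity to a reference embedding falls below a threshold. The function names, the toy three-dimensional vectors, and the 0.9 threshold are all illustrative assumptions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def drifting_frames(reference, frame_embeddings, threshold=0.9):
    """Return indices of frames whose similarity to the reference is too low."""
    return [
        index
        for index, embedding in enumerate(frame_embeddings)
        if cosine_similarity(reference, embedding) < threshold
    ]


# Toy embeddings standing in for the output of a real face-embedding model.
reference = [1.0, 0.0, 0.0]
frames = [
    [1.0, 0.0, 0.0],  # identical to the reference
    [0.9, 0.1, 0.0],  # slight variation, still the same face
    [0.5, 0.5, 0.7],  # the face has drifted
]
flagged = drifting_frames(reference, frames)  # [2]
```

A check like this only detects the problem; it says which frames to regenerate, not how to keep the character stable in the first place.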